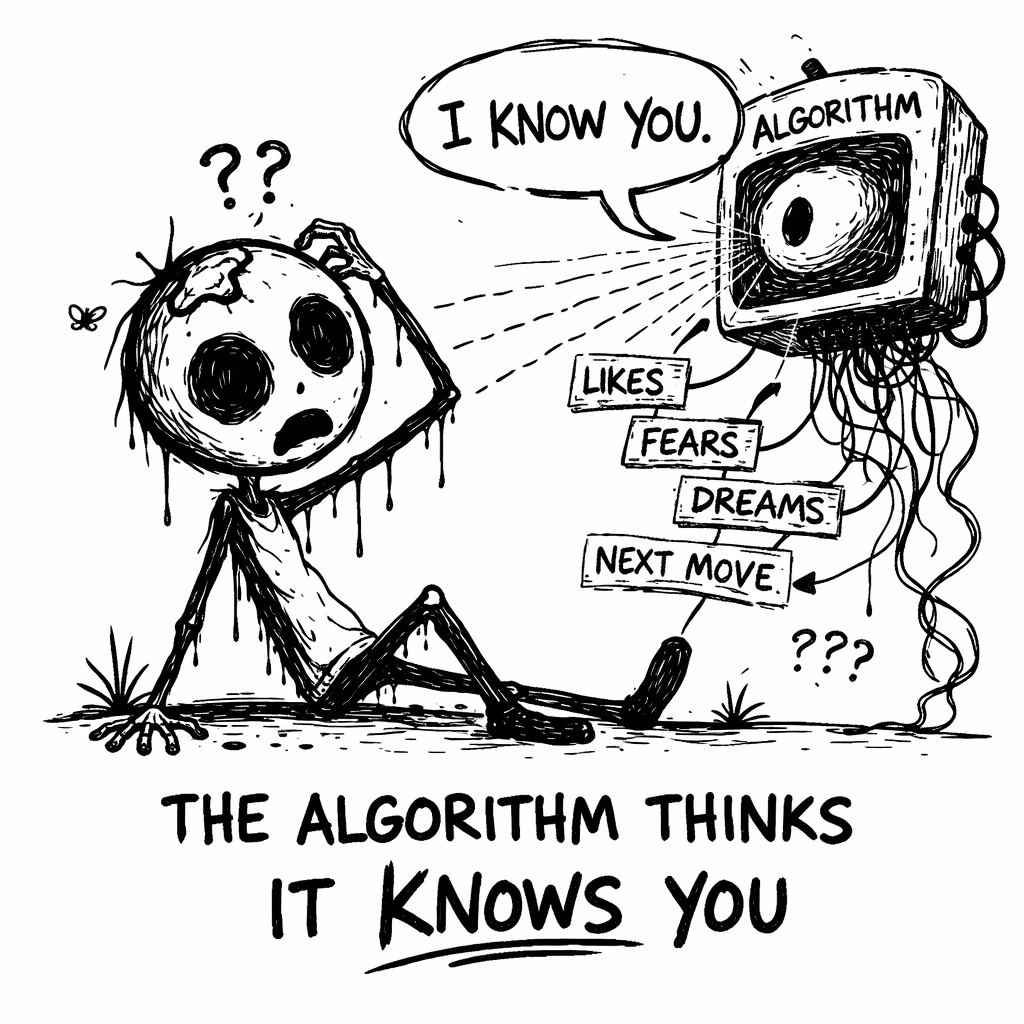

The Algorithm Thinks It Knows You

There’s a quiet arrogance humming beneath your entire digital life, and it doesn’t belong to you. It belongs to a machine that has been watching you scroll, pause, hover, rewind, abandon, and linger, collecting those tiny movements like breadcrumbs and assembling them into a profile so detailed it starts to feel psychic. Over time, you begin to feel seen by it. When your feed surfaces the exact topic you were just thinking about, when it recommends the strangely specific joke that feels engineered for your sense of humor, or when it predicts the product you almost searched for but didn’t, the experience feels intimate. You start to wonder whether it understands you better than your friends do.

It doesn’t.

What it understands are patterns. It doesn’t recognize your longing; it measures your latency. It doesn’t comprehend your grief; it registers that you watched three videos about grief until the end and replayed one. The algorithm can’t tell whether you lingered on something because you were inspired, disturbed, confused, or quietly unraveling. It only knows that you stayed, and in its language, staying is desire. That translation error is where the illusion begins.

The algorithm doesn’t wake up in the morning curious about your inner life. It doesn’t care about your contradictions, your private revisions, or the tension between who you are and who you are becoming. It cares about probability. It builds a model of you from repetition and reinforcement, then refines that model every time you move. The more accurately it predicts your next click, the more convincing it becomes. Prediction starts to feel like understanding, and convenience starts to feel like intimacy.

Data Is Not Identity

The algorithm assumes that repetition equals essence. If you click on something three times, it becomes a preference. If you pause on something twice, it becomes an interest. If you watch a certain category of content long enough, it becomes part of your personality profile. In that framework, you aren’t a dynamic human being but a cluster of weighted probabilities. However, repetition isn’t identity. Sometimes you click because you’re bored. Sometimes you click because you’re anxious. Sometimes you click because you’re trying to understand something you don’t even agree with. The machine can’t distinguish curiosity from insecurity or fascination from fear.

Yet it treats all of these behaviors as signals of who you are.

Once those signals accumulate, you’re sorted into categories. “Recommended for you” and “Because you watched” begin to define your informational environment. You are quietly grouped with “people like you,” even though you never consented to that classification and may not even recognize yourself in it. The algorithm reinforces the cluster by showing you more of what that group tends to consume, which increases the likelihood that you will continue behaving in ways consistent with the profile it has built.

The process feels efficient and even comforting, but it is also compressive. Over time, the range of content that reaches you narrows. Your feed becomes less surprising and more aligned with the digital silhouette constructed from your past behavior. Because the system rewards consistency, you begin to lean into the parts of yourself that generate predictable engagement. Interests that once felt exploratory start to harden into identity markers. You may tell yourself, “This is just who I am,” without realizing how much of that sense of self has been reinforced simply by exposure.

The algorithm doesn’t need to argue with you to shape you. It only needs to curate your environment. Humans adapt to their environments automatically, and when your digital environment repeatedly reflects a particular version of you back to yourself, that reflection starts to feel authoritative. Reinforcement masquerades as authenticity. Familiarity begins to feel like truth.

Prediction Is Not Understanding

There is something intoxicating about being predicted accurately. When the system anticipates your next interest before you consciously articulate it, it feels like magic. It feels as though the machine has peered into your mind and discovered something essential. In reality, prediction at scale is not intimacy; it’s statistical similarity. You resemble millions of other users who behaved in comparable ways under comparable conditions. That resemblance allows the algorithm to forecast your behavior with impressive precision, but it does not mean it understands your motivations.

Weather apps can predict rain without understanding clouds, and the algorithm can anticipate your next click without understanding you. It arranges the room around you rather than engaging in conversation with you. If your feed leans toward outrage, you begin to perceive the world as more volatile. If it leans toward hustle culture, you feel behind. If it leans toward fear, you grow cautious. The system doesn’t debate you; it adjusts the atmosphere and lets you draw conclusions inside it.

Because the algorithm rewards predictability, it subtly discourages contradiction. Yet you’re a process, not a static profile. You change your mind in the shower. You reconsider beliefs after quiet conversations. You grow bored with interests that once defined you. You evolve in ways that are nonlinear and often invisible. The algorithm, however, prefers stability. It builds a snapshot of your past behavior and refreshes it incrementally, always anchored in what you have already done. When you begin to shift, the system lags behind, continuing to feed you the older version of yourself because that version is statistically safer.

That creates a quiet psychological drag. If you are trying to grow beyond certain patterns, you have to do so in an environment that keeps reflecting those patterns back to you. The content you are outgrowing continues to appear. The identity you are shedding remains recommended. It becomes easier to stay consistent than to transform, not because you lack courage, but because frictionless systems reward inertia.

The algorithm thinks it knows you because it has mapped your historical behavior in extraordinary detail. It knows your dwell time, your scroll speed, and the headlines that make you hesitate. It doesn’t know your dissatisfaction, your emerging curiosity, or the internal tension building beneath your habits. It only sees what you’ve done, and then it projects that forward.

The danger isn’t that the machine understands you. The danger is that you start to understand yourself through the machine. You begin to describe your identity in terms of what shows up in your feed. You assume your digital reflection is comprehensive. You forget that you are more volatile, more contradictory, and more expansive than your consumption history suggests.

Joshua Palms isn’t anti-technology; it is anti-amnesia. It is a reminder that you are not reducible to your clickstream. You aren’t a marketing segment, a behavioral cluster, or a bundle of weighted preferences. You are a human being unfolding over time, shaped by experiences that can’t be tracked, measured, or predicted.

The algorithm thinks it knows you because it has never encountered your interior life. It has never seen you change your mind without posting about it. It has never witnessed the quiet decisions that redirect your trajectory. It has never experienced the version of you that does not behave in statistically convenient ways. You aren’t here to be predicted. You are here to evolve.

The system will continue refining its model because that is what it is built to do. The real question is whether you will continue refining yourself in ways that resist easy categorization. When you recognize that prediction isn’t the same as understanding, the illusion weakens. The recommendations lose their prophetic tone. The feed becomes less mystical and more mechanical.

The algorithm can simulate familiarity, but it can’t simulate freedom. Freedom begins when you stop mistaking accurate prediction for genuine insight and remember that you’re more than the data you leave behind. It thinks it knows you. The most subversive thing you can do is continue becoming someone it can’t easily model.